Hello,

I'm trying to make a PBR Vulkan renderer and I wanted to implement spherical harmonics for the irradiance part (and maybe PRT in the future, but that's another story).

The evaluation on the shader side seems okay (it looks good if I hardcode the SH coefficients directly in the shader), but when I try to generate them from a .hdr map, the output is only grayscale.

I've been trying to debug this for 3 days now and I have no clue why all my colour coefficients are gray.

Here is the generation code:

    SH2 ProjectOntoSH9(const glm::vec3& dir) {
        SH2 sh;

        // Band 0
        sh.coef0.x = 0.282095f;

        // Band 1
        sh.coef1.x = 0.488603f * dir.y;
        sh.coef2.x = 0.488603f * dir.z;
        sh.coef3.x = 0.488603f * dir.x;

        // Band 2
        sh.coef4.x = 1.092548f * dir.x * dir.y;
        sh.coef5.x = 1.092548f * dir.y * dir.z;
        sh.coef6.x = 0.315392f * (3.0f * dir.z * dir.z - 1.0f);
        sh.coef7.x = 1.092548f * dir.x * dir.z;
        sh.coef8.x = 0.546274f * (dir.x * dir.x - dir.y * dir.y);

        return sh;
    }

    SH2 ProjectOntoSH9Color(const glm::vec3& dir, const glm::vec3& color) {
        SH2 sh = ProjectOntoSH9(dir);
        SH2 shColor;

        shColor.coef0 = color * sh.coef0.x;
        shColor.coef1 = color * sh.coef1.x;
        shColor.coef2 = color * sh.coef2.x;
        shColor.coef3 = color * sh.coef3.x;
        shColor.coef4 = color * sh.coef4.x;
        shColor.coef5 = color * sh.coef5.x;
        shColor.coef6 = color * sh.coef6.x;
        shColor.coef7 = color * sh.coef7.x;
        shColor.coef8 = color * sh.coef8.x;

        return shColor;
    }

    void SHprojectHDRImage(const float* pixels, glm::ivec3 size, SH2& out) {
        double pixel_area = (2.0f * M_PI / size.x) * (M_PI / size.y);
        glm::vec3 color;
        float weightSum = 0.0f;

        for (unsigned int t = 0; t < size.y; t++) {
            float theta = M_PI * (t + 0.5f) / size.y;
            float weight = pixel_area * sin(theta);

            for (unsigned int p = 0; p < size.x; p++) {
                float phi = 2.0 * M_PI * (p + 0.5) / size.x;
                color = glm::make_vec3(&pixels[t * size.x + p]);
                glm::vec3 dir(sin(phi) * cos(theta), sin(phi) * sin(theta), cos(theta));
                out += ProjectOntoSH9Color(dir, color) * weight;
                weightSum += weight;
            }
        }

        out.print();
        out *= (4.0f * M_PI) / weightSum;
    }

Outside of the SHprojectHDRImage function, that's pretty much the code from MJP, which you can check here:

https://github.com/TheRealMJP/LowResRendering/blob/2f5742f04ab869fef5783a7c6837c38aefe008c3/SampleFramework11/v1.01/Graphics/SH.cpp

I'm not doing anything fancy in terms of math or code, but this is my first time with these, so I feel like I forgot something important.

Basically, for every pixel of my equirectangular HDR map, I generate a direction, get the colour, and project it onto the SH basis.

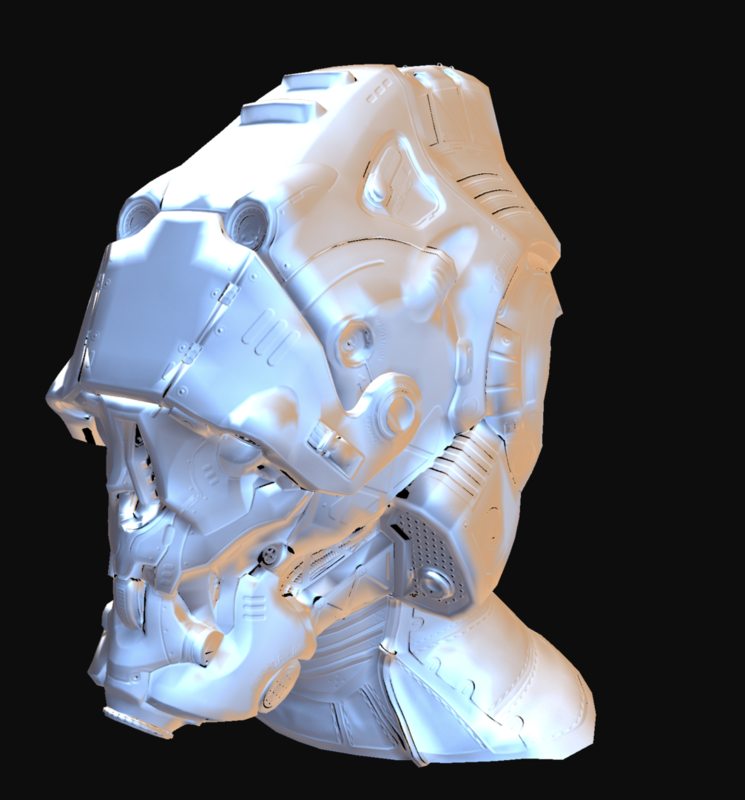

Strangely, I end up with SH coefficients looking like this:

coef0: 1.42326 1.42326 1.42326

coef1: -0.0727784 -0.0727848 -0.0727895

coef2: -0.154357 -0.154357 -0.154356

coef3: 0.0538229 0.0537928 0.0537615

coef4: -0.0914876 -0.0914385 -0.0913899

coef5: 0.0482638 0.0482385 0.0482151

coef6: 0.0531449 0.0531443 0.0531443

coef7: -0.134459 -0.134402 -0.134345

coef8: -0.413949 -0.413989 -0.414021

This is with the HDR map "Ditch River" from this web page: http://www.hdrlabs.com/sibl/archive.html

I also get grayscale on the 6 other HDR maps I tried from hdr heaven, it's just a different gray.
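For anyone who wants to poke at this in isolation, here is a minimal sketch of the two per-pixel steps described above (generating a direction and fetching the colour), written without GLM so it compiles on its own. It rests on assumptions that are not confirmed anywhere in this post: that the loader returns tightly packed RGB floats (3 per pixel, row-major), and that the usual spherical convention applies (theta measured from +Z, phi around Z). `Vec3`, `fetchRGB` and `equirectDirection` are hypothetical names, not part of MJP's code.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>

// Plain stand-in for glm::vec3 so the sketch compiles without GLM.
struct Vec3 { float x, y, z; };

// Hypothetical helper: fetch the texel at column p, row t.
// ASSUMPTION: the buffer holds tightly packed RGB floats
// (3 floats per pixel, row-major), hence the factor of 3.
Vec3 fetchRGB(const float* pixels, int width, int p, int t) {
    assert(pixels != nullptr && width > 0 && p >= 0 && p < width && t >= 0);
    const std::size_t i = 3 * (static_cast<std::size_t>(t) * width + p);
    return { pixels[i], pixels[i + 1], pixels[i + 2] };
}

// Hypothetical helper: unit direction for the centre of texel (p, t).
// ASSUMPTION: theta is the polar angle from +Z, phi the azimuth around Z.
Vec3 equirectDirection(int width, int height, int p, int t) {
    assert(width > 0 && height > 0);
    const float pi = 3.14159265358979f;
    const float theta = pi * (t + 0.5f) / height;
    const float phi = 2.0f * pi * (p + 0.5f) / width;
    return { std::sin(theta) * std::cos(phi),
             std::sin(theta) * std::sin(phi),
             std::cos(theta) };
}
```

If the buffer really is 3 floats per pixel, indexing it with `t * width + p` alone would read overlapping float triples from the first third of the image, so the three channels would sum almost the same values, which might explain near-identical R, G and B coefficients. The sketch also multiplies the x and y components by `sin(theta)`, which keeps the direction unit length.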

If anyone has any clue, that would be really welcome.